The rev.ng decompiler goes open source + start of the UI closed beta

Announcement

Hi there! Long time no see! It has been a while since our last update about rev.ng, but we've been quietly working hard. So, it's time for some news! 🚀

tl;dr In this blog post we:

- Announce the open sourcing of the rev.ng decompiler, the start of the UI closed beta, the release of the new website, rev.ng Hub and the docs. Also, we do private demos.

- Explain how to try rev.ng.

- Explain the design and goals of rev.ng as a whole.

- Explain the relation between the open source project, the UI running in the cloud and the standalone UI.

- Present our roadmap towards the 1.0 release.

- Describe how to interact with us (i.e., X/Twitter, Discord, Newsletter, Discourse).

1. What happens today?

Quite a lot.

- We are open sourcing

revng-c, the backend of our decompiler.

As of today, the whole decompilation engine is open source!

We're also releasing initial documentation on how to use rev.ng. - We'll soon invite newsletter subscribers to the closed beta for the rev.ng UI.

We're going to invite people on FIFO basis, make sure you're registered. - We're releasing the new website. You are looking at it.

- We're publishing rev.ng Hub, the entrypoint to use the cloud version of rev.ng.

Beta users will be able to create projects, run the UI in the browser and collaborate with other users without installing anything.

If you're not part of the closed beta yet, you can still explore existing public projects. - We're starting to look for people curious about getting a private demo of the rev.ng capabilities (interested?).

2. How to try rev.ng

Very briefly, let's install revng.

No root required, everything will fit into a single directory that you can discard at any time.

OK, let's now decompile a simple program. Consider example.c:

You can compile and decompile it:

You should obtain:

Alternatively, take a look at the documentation. In particular, make sure you correctly set up a working environment, follow the tutorial to create a model from scratch or try directly the analyses tutorial, which will show you how to decompile a program in a single invocation.

To try the UI, register to our newsletter. As mentioned before, we'll soon start inviting people in small batches.

Note that the goal of this release is to demo the UI and the decompilation results on some binaries on which we get interesting results, let people work on some real world binaries and then let people try whatever they like and get feedback, bug reports and so on.

In particular, here are some non-trivial binaries (with their .text size) that we use for testing: hostname (2K), ntfscat (4K), umount (8K), chroot (15K), nc.openbsd (15K), apt-get (18K), df (45K), parted (38K), gzip (54K), updatedb.plocate (56K), resolvectl (61K), ps (47K).

rev.ng supports many ABIs and platforms. However, at this stage, we performed initial QA on Linux x86-64 binaries. rev.ng will evolve significantly during the closed beta period, so make sure you follow our X/Twitter account and are subscribed to our monthly newsletter to know when it's time to come back to try out some new features!

If you have problems, head to Discourse for help.

3. Our goals

This is a good time for a refresher about what the rev.ng decompiler is about. In brief, we focus on:

- automatic recovery of data structures;

- a modern UX;

- collaborative reversing;

- wide platform support;

- extensibility.

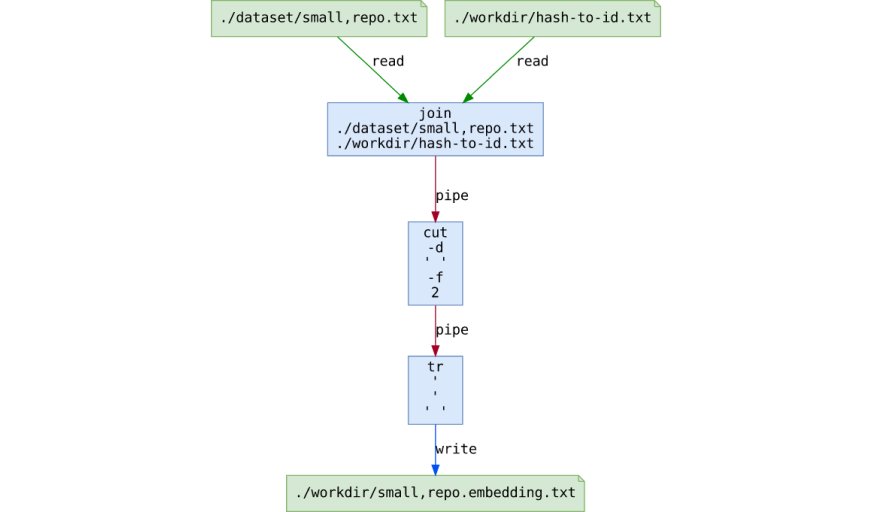

Automatic data structures recovery

Thanks to Data Layout Analysis, we can automatically recover the layout of structs interprocedurally.

Consider the following struct, a node in a linked list with an array of 5 integers:

A function summing the elements of the array:

And a function traversing the linked list:

rev.ng produces the following output out of the box (except for the comments):

Pretty nice, isn't it? You can also directly on Hub

Bonus: we emit syntactically valid C code.

Status as of today: available.

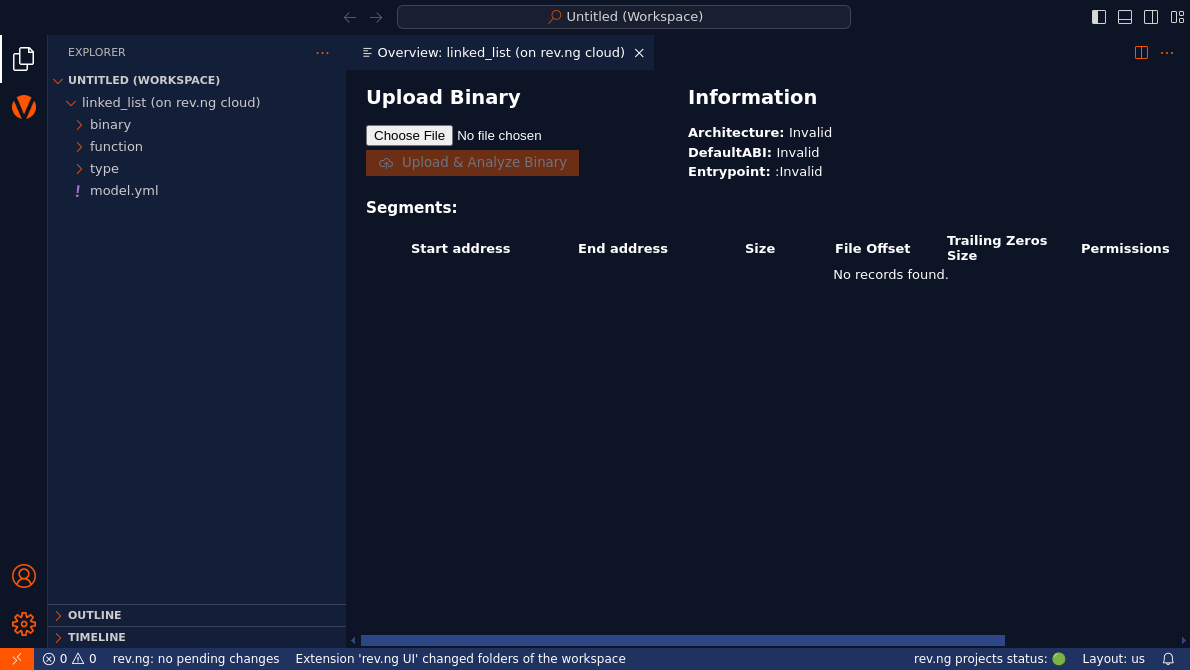

A modern UX

Our UI is based on VSCode. If you use VSCode, you already know how to use it. Also, the UI can both run in a browser tab or as a standalone application.

Shortcuts:

Ctrl + Click, navigates to the definition of the function/type under the cursor.Nrenames it.Ylet's you edit its type.Xshows references.

rev.ng is not just a decompiler, it's an interactive decompiler. This means that you can make changes interactively and only the things that are affected by your change will be recomputed, just like you would expect.

Status as of today: the UI is available for participants in the closed beta.

Collaborative reversing

The rev.ng UI has a client-server architecture.

Multiple users can connect to the same daemon instance and work at the same time on the same project. If you use the cloud version, you also get a GitHub-like application to manage projects, the rev.ng Hub.

Status as of today: works, needs some love to improve the user experience (roadmap item #797).

Wide architecture support

In order to understand why we can easily support many architectures, let's briefly digress on compilers and emulators.

Compiler are usually organized in frontend, mid-end and backend. The frontend handles details specific to the input language. The mid-end performs optimizations on an intermediate representation (IR) that is independent of both the input language and the target architecture. Finally, the backend performs architecture-specific optimizations and outputs executable code.

Let's consider a part of the LLVM ecosystem:

LLVM IR is LLVM's intermediate representation.

Let's now consider QEMU, a well-known emulator. When running in emulation mode, as opposed to virtualization mode (e.g., KVM), works in a similar fashion. It has its own frontends, its own IR (tiny code) and multiple backends. The main difference relies in the fact that the input is not C or C++ but ARM or x86-64 executable code:

This type of emulation is also known as dynamic binary translation.

Now, rev.ng works like this:

Basically we use the first half of the QEMU pipeline to lift executable code to tiny code, convert it to LLVM IR and then proceed with the decompilation process.

This means we can support any architecture supported by QEMU with relative ease.

However, architecture support is just part of the story. The other big topic is how well a certain platform or, more specifically, an ABI, is supported. In this regard, we put a lot of effort to be able to describe in a declarative way all the properties of an ABI. Our ABI description format is general enough that supporting new ABIs will be quite easy.

Status as of today: we support x86, x86-64, ARM, AArch64, MIPS, and s390x to various degrees of maturity.

In terms of binary formats, we support ELF, PE/COFF and Mach-O.

We can also import from .idb, DWARF debug info and PDB debug info.

We performed most QA on Linux x86-64 binaries, all the rest needs additional QA.

If you have a specific interest in some platform (and a budget), we're happy to talk :).

Track the roadmap item #58 for further QA on more platforms.

Extensibility

rev.ng aspires to become the go-to framework for reverse engineering tools. For this reason, the whole project is open source, with the exception of our interactive UI.

rev.ng is also easily scriptable. Our project file, the model, is just a YAML document. Here's an example with some comments.

The docs offers a step-by-step tutorial to understand the model above.

Basically, if you can parse JSON or YAML, you can script rev.ng.

We plan to offer easy-to-use wrappers for Python and TypeScript to easily make changes and obtain artifacts (e.g., decompiled code or disassembly).

Example usage in Python:

Example usage in TypeScript:

Finally, since we use extensively use LLVM IR as our main internal representation, you can exploit many many tool in its ecosystem. For instance, you could use KLEE to perform symbolic execution or SanitizerCoverage + libFuzzer (or other tools) to perform coverage-guided fuzzing. Also, since the output of our decompiler is valid C, we've also been able to play around with clang static analyzer to look for common vulnerabilities directly on the decompiled code. But this probably deserves a blog post on its own.

Status as of today: we have Python and TypeScript wrappers to manipulate the model, but not an easy way to run analyses and fetch artifacts. Roadmap item #17 tracks building a full fledged Python client.

4. Open source vs free-to-use vs premium

For a quick comparison of the various components of rev.ng take a look at the feature comparison page.

Very briefly:

- The rev.ng framework is fully open source. You can decompile anything you want from the CLI.

- The UI will be available in the following forms:

- free to use in the cloud for public projects;

- available through a subscription in the cloud for private projects;

- available at a cost as a fully standalone, fully offline application.

rev.ng in the cloud?

Yes.

- You can create a project through rev.ng Hub and invite collaborators.

- The UI runs in the browser.

- The backend runs in our cloud.

Here's the deal:

- Public projects are free. If you're OK with having your project file public, you can use the cloud version of rev.ng for free, UI included.

- Private projects require a subscription.

We're also willing to discuss installing a private instance of the rev.ng cloud service on premise, if you're into kubernetes. Interested?

As mentioned above, we will also offer a fully standalone version of the UI for which you can buy a regular license and run it wherever you prefer.

5. The roadmap

rev.ng is a complex project and getting to the 1.0 version is going to take quite some effort. But don't worry, we have a detailed roadmap on how to get there.

The roadmap is divided into 4 tiers:

- Tier 1 (done): alpha version, demo to friends.

- Tier 2 (starts today): beta version, give access to the cloud version to newsletter subscribers.

- Tier 3 (to come): open beta.

- Tier 4 (to come): 1.0 release.

Want to know more? Check out the detailed roadmap page.

6. How to get in touch and stay up to date

We publish and can be contacted in several ways:

- X/Twitter: frequent news about development and major announcements.

- Discord: random chat and real-time discussions with the dev team.

- Discourse: a forum for users to get support and report bugs.

- GitHub: where all our open source development takes place, please open an issue if you're trying to extend rev.ng.

- Monthly newsletter: subscribe to participate in the closed beta and get monthly news about the project advancements.

- E-mail: want some privacy? Drop us an e-mail.

That's all for today. Stay tuned for more! 🚀